Apr 13, 2021

In-depth Report: Dr. Tao Mei: Deep Look at the Industry Through Computer Vision

by Yuchuan Wang

It is currently the harvest season for Dr. Tao Mei, the head of computer vision research and development at JD Technology (JDT) and the director of JD’s computer vision and multimedia lab. The annual computer vision conference CVPR (IEEE/CVF Conference on Computer Vision and Pattern Recognition), to be held in June, will include 11 papers from JDT, six of which were submitted by Dr. Mei’s team. The team, which has over 50 members, is JDT’s champion in academic competitions.

Tao Mei

Ph.D., IEEE/IAPR Fellow

Vice President of JD.com

Deputy Managing Director of JD AI Research

As an IEEE Fellow, Dr. Mei has distinctly different expectations for the engineers and the scientists on his team. “Although mingling engineers and scientists might maximize the working efficiency, I would doubt it’s beneficial for deep research and innovation,” said Dr. Mei. In his opinion, engineers should focus on creating standardized products and services that meet the requirements of diverse clients and business scenarios, while scientists should be guaranteed enough freedom to do research. “We have a relatively exclusive computer vision lab function in our team, and I hope all the scientists there do one of two things: be the best, or be the first.”

Dr. Mei joined JD.com in 2018 to lead the research, innovation and application of computer vision technologies. He is a pioneer of large-scale multimedia analysis and applications. He has authored or co-authored over 200 publications, received 12 best paper awards from industry-leading institutions including IEEE and ACM (Association for Computing Machinery), and holds over 50 US and international patents.

Prior to JD, he spent twelve years working at Microsoft Research Asia in Beijing, where he contributed over 20 inventions and technologies to Microsoft’s products and services, including the renowned virtual companion chatbot Xiaoice.

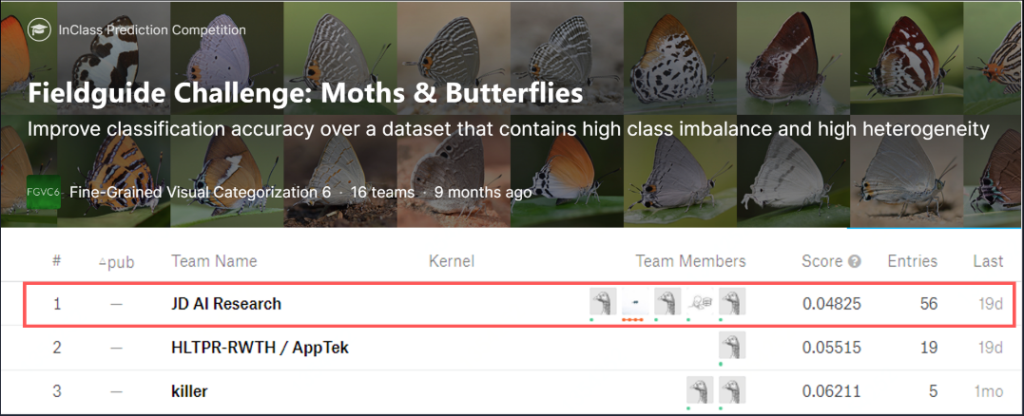

Dr. Mei’s team won first place in the Fieldguide Challenge for moth and butterfly recognition at CVPR 2019

As a field of artificial intelligence (AI) that trains computers to interpret and understand the visual world, computer vision is like “connecting the computer with a camera so it can describe what the camera sees,” said Dr. Mei. For example, merchants and customers upload numerous photos and videos to e-commerce platforms every day. Determining whether this multimedia content is compliant requires a lot of auditing work, such as checking whether it contains pornographic or violent material, or whether a product picture meets the platform’s design requirements. Thanks to computer vision, this work can be done automatically by AI. At JD.com, AI processes billions of pictures and videos every day.

“To unleash the power of AI, we need to provide rich application scenarios and training data. JD has the most extensive and diversified retail scenarios, which in turn naturally connects research with the industry. The company’s commitment to long-term technology investment makes me believe AI can create greater influence than ever in the industry.”

Making retail smarter

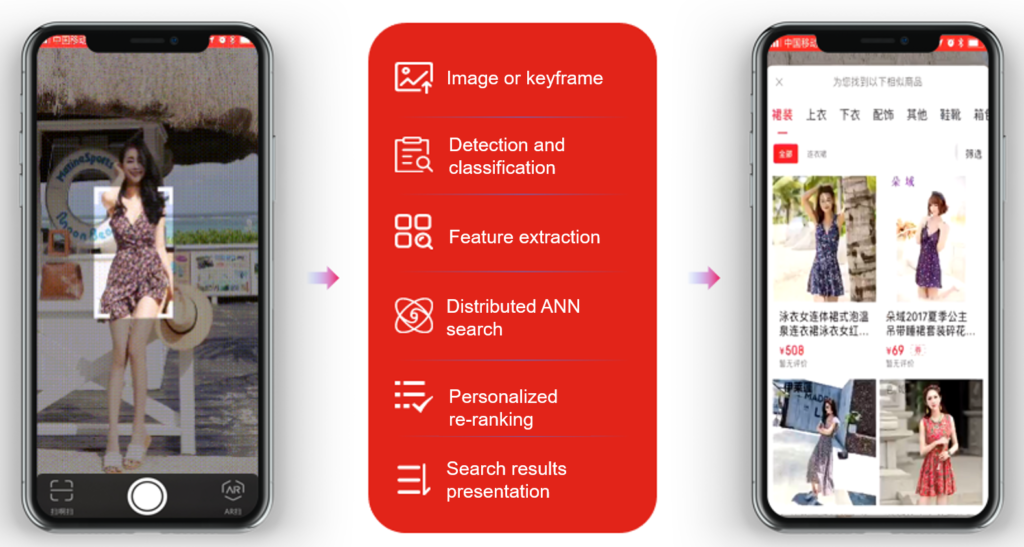

JD’s SnapShop is a ground-breaking application of computer vision in online retail. Previously, a customer who wanted to place an order online needed to type in the exact name or description of the product. That made online shopping challenging for nonstandard products, such as apparel, shoes, and imported products with foreign-language descriptions. SnapShop uses AI to reduce the effort customers spend searching for the exact products they want.

With SnapShop, the e-commerce platform can analyze a photo uploaded by a customer and recommend identical or similar products on the fly. Whether it is detecting a bottle of wine on the dinner table or picking out the distinct elements of an outfit, SnapShop makes shopping much more convenient.
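The article does not describe how SnapShop is implemented. As a rough illustration of the general idea behind finding "identical or similar" products from a photo, the sketch below ranks catalog items by cosine similarity between feature vectors. The vectors, the product names, and the `top_matches` function are all hypothetical; a real system would obtain such vectors from a trained image model and search a far larger catalog.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat an all-zero vector as matching nothing
    return dot / (norm_a * norm_b)

def top_matches(query_vec, catalog, k=2):
    """Return the names of the k catalog items most similar to the query."""
    scored = [(cosine_similarity(query_vec, vec), name)
              for name, vec in catalog.items()]
    scored.sort(reverse=True)  # highest similarity first
    return [name for _, name in scored[:k]]

# Hypothetical 3-dimensional feature vectors, for illustration only.
CATALOG = {
    "red wine": [0.9, 0.1, 0.0],
    "white wine": [0.8, 0.3, 0.1],
    "running shoes": [0.0, 0.2, 0.9],
}

if __name__ == "__main__":
    # A query vector standing in for the features of an uploaded photo.
    print(top_matches([1.0, 0.1, 0.0], CATALOG))
```

Ranking by similarity rather than exact match is what lets a search return close alternatives when the identical product is not in the catalog.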

SnapShop at JD.com

“As of now, the daily sales generated from SnapShop are tens of millions of RMB, making a great contribution to the e-commerce business, and also significantly enriching customers’ online shopping experiences via multimodal interactions,” said Dr. Mei. “Cell phone manufacturers including Huawei, Vivo, Xiaomi and Samsung have integrated our SnapShop API in their smartphones. For example, you can turn on Vivo’s built-in camera to search products directly on JD.com.” According to Mei, daily external calls to the SnapShop API through JD’s NeuHub Open AI Platform have reached the tens of millions.

This technology has also inspired Mei’s team to research a waste-sorting function, which helps users classify different types of discarded items and learn how to dispose of them properly, now that China has begun requiring trash sorting in communities.

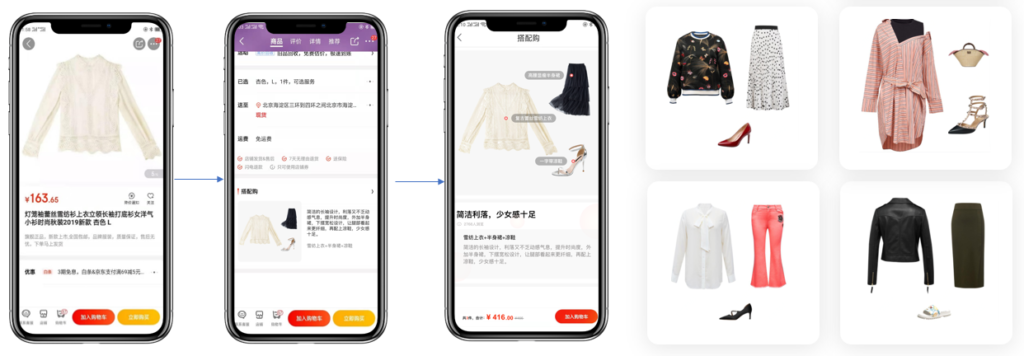

Dr. Mei and his team also integrate AI with aesthetics to enable automated fashion collection recommendations. For instance, when a consumer orders a T-shirt on JD, the app automatically recommends a bag, shoes, or necklace that would pair well with it. The recommendations are based on the analysis of a large number of fashion pictures.

“We’ve seen that on most occasions, the sales conversion rate of a machine-recommended collection is higher than that of the manually recommended ones,” said Dr. Mei. “This is incredible. We suspect this is probably because a machine does not have a bias in recommending products, but a salesman might have.”

Fashion collection recommendation via AI

Making manufacturing more efficient

“AI is still new to the manufacturing industry, as it is composed of different scenarios with different know-how and requires customized solutions,” said Dr. Mei. The “Industrial Brain” project aims to promote the transformation of traditional manufacturing by enhancing production efficiency while lowering operating costs.

Although the production processes in many plants have been advanced by automation, the quality assurance (QA) procedure is still labor-intensive. For example, during the QA process for smartphones, a complete examination of the appearance takes at least 20 seconds. Employees inspect the phones closely with magnifying glasses to check for tiny scratches or spots on the surface.

“Many manufacturers tell us that it is becoming even harder to recruit QA staff. Young people, especially Gen Z, are unwilling to take such positions because they are often boring, tiring and seem to lead nowhere.”

The “Industrial Brain” has managed to change the situation. Sensors, including cameras, simulate the functions of human hands, eyes and ears, while AI algorithms simulate human cognitive and execution abilities.

JD’s “Industrial Brain” workstations for smartphone QA


By combining a robotic arm, optical cameras, and computer vision algorithms, the system has reduced the examination of a cell phone to three to four seconds. The robotic arm first grabs the product and a picture is taken of it. AI then determines whether it is normal or defective. The same method can also be used to inspect product packaging, for example to proofread the design and font size.
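To put the inspection times quoted in the article into perspective, the short calculation below converts them into units inspected per hour at a single station. It assumes continuous operation with no handling time between units, which is a simplification not stated in the article; `units_per_hour` is an illustrative helper name.

```python
def units_per_hour(seconds_per_unit):
    """Units inspected in one hour at a constant time per unit."""
    return 3600 / seconds_per_unit

# Figures from the article: at least 20 s manually, 3 to 4 s automated.
manual_max = units_per_hour(20)      # upper bound for manual inspection
automated_low = units_per_hour(4)    # automated, slower end of the range
automated_high = units_per_hour(3)   # automated, faster end of the range

if __name__ == "__main__":
    print(f"manual: at most {manual_max:.0f} units/hour")
    print(f"automated: {automated_low:.0f} to {automated_high:.0f} units/hour")
    print(f"speed-up: at least {automated_low / manual_max:.0f}x")
```

Under these assumptions, one station moves from at most 180 phones per hour to between 900 and 1,200.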

“Workers are no longer inspecting the products directly; they have been transformed and upgraded to being the supervisor and operator of the machines.”

“Further to this scenario, once we’ve identified the common problems with the products, this information can be used to optimize the manufacturing procedure,” said Dr. Mei. “It means that through analysis, we will know if we need to adjust the raw materials or change the production temperature, thus lowering the defect rate.”

Making plants safer

Since 2019, the computer vision algorithms researched by Dr. Mei’s team have been made available to Chiwan Port through NeuHub, where they monitor port operations, including vehicles, equipment and personnel, to prevent production accidents.

Located in Shenzhen in the Guangdong-Hong Kong-Macao Greater Bay Area, Chiwan Port is one of the world’s fastest-growing container ports, with an annual throughput of more than 8 million tons. On average, 3,750 vehicles work in the port every day to load, unload and transport goods such as cereals and steel. The frequent interaction among vehicles, equipment and staff in the port also creates significant hidden dangers, especially during transportation, loading and unloading, and warehousing.

Using real-time data collected by intelligent, edge-computing-powered terminals deployed in the port, AI automatically labels and identifies the collected image data, enabling monitoring and prediction at the same time. For example, AI can identify various types of vehicles, pedestrians, lanes, guardrails, traffic lights, traffic signs and other targets in front of working vehicles, and trigger early warnings of possible accidents such as insufficient following distance, forward collision, pedestrian collision and lane departure. In addition, by leveraging computer vision and millimeter-wave radar technologies, the system can alert operators to the possible “invasion” of people or vehicles in the operation area, so as to avoid collisions while handling large containers, which might result in injuries to personnel and loss of goods.
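The article does not say how the port system decides when to raise a warning. One common way to reason about alerts for following distance and forward collision is time-to-collision: the gap to the object ahead divided by the speed at which that gap is closing. The sketch below is a minimal illustration under that assumption; the function names and the threshold values (`min_gap_m`, `ttc_alert_s`) are hypothetical and not taken from JD's system.

```python
def time_to_collision(gap_m, closing_speed_mps):
    """Seconds until contact at constant closing speed; inf if the gap is not shrinking."""
    if closing_speed_mps <= 0:
        return float("inf")
    return gap_m / closing_speed_mps

def warning_level(gap_m, closing_speed_mps, min_gap_m=5.0, ttc_alert_s=2.0):
    """Classify a situation using hypothetical, illustrative thresholds."""
    if time_to_collision(gap_m, closing_speed_mps) < ttc_alert_s:
        return "forward collision"
    if gap_m < min_gap_m:
        return "too close"
    return "ok"

if __name__ == "__main__":
    # 10 m gap closing at 8 m/s gives 1.25 s to contact.
    print(warning_level(10.0, 8.0))
    # 3 m gap with no closing speed is still under the minimum gap.
    print(warning_level(3.0, 0.0))
    # 30 m gap closing at 5 m/s gives 6 s to contact.
    print(warning_level(30.0, 5.0))
```

Checking the time-based condition first means a fast-approaching vehicle is flagged even when the current gap still looks comfortable.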

Since the introduction of the system in November 2019, the vehicle accident rate in Chiwan Port has dropped to zero, a remarkable outcome. At the same time, the working efficiency of port management has improved by 40%.

Be wild

Although Dr. Mei’s work has become busier and busier, life still provides him with an inexhaustible source of innovation.

Ten years ago, he joined a band as the lead vocalist. Today, he runs marathons every year and goes running every week.

Dr. Mei and his band (left), and Dr. Mei participating in the Hangzhou Marathon in 2020 (right)


“We need to refresh ourselves and be wild. Life and nature give me more inspiration and lead me to be more creative in my job,” said Dr. Mei. “Life always begins at the end of your comfort zone.”
